A year ago, most infrastructure teams were still debating whether AI belonged in their product at all. Now, nearly halfway through 2026, in addition to asking “how do we support agents?” the industry is asking “what happens when agents touch money?”

We started building for this before the questions were widely asked. Not because we predicted a specific outcome, but because the signals were clear enough that we had to pick a direction and learn. Here’s what we learned, what it changed, and the gaps we’re working to close.

Two experiments in agentic infrastructure

About a year ago, when we launched the Fireblocks AI Suite, we took two distinct approaches at once. One was native: Genie, an AI interface built directly into the Fireblocks platform. The other was a remote integration: an MCP server that lets external agents connect to Fireblocks and expand their intelligence surfaces.

Both started restrictive on purpose. When the AI infrastructure you’re building touches institutions, the security layer has to precede the capability layer. This is an architectural requirement. We needed to understand how agent-driven workflows behave at production scale before we could reasonably extend permissions.

Six months of production usage revealed new AI use cases to help power institutional finance.

What users actually did with the tools we gave them

Fireblocks AI Link, our MCP server integration, was built to help customers bridge their workflows using AI and natural language processing, extending their usage of the Fireblocks platform to perform intelligent analysis of their workspace data.

Genie launched as a treasury operations tool, but the way teams actually used it turned out to be broader than that. Within months, users were pushing it toward investigating policy configurations, pulling context from the help center, and surfacing answers to support questions. The pattern was consistent: teams didn’t want a chatbot sitting next to the platform. They wanted an operational layer that could reason across it.

Two categories of capabilities emerged:

- Agentic accounting and treasury management, where agents handle AI-driven financial operations and the compliance and security configurations around them.

- Agentic payments infrastructure, or more specifically, the ability to send and receive money through agents using emerging protocols like x402 and MPP.

Both categories require the agent to own operations end-to-end and engage across the entire transaction flow.

This is what the customer was actually trying to solve. Not AI features for their own sake, but operational delegation with the same policy enforcement that governs human operators.

The constraint that forced a specific product decision

The moment agents touch transactions, a new attack surface opens. This is a reality that has to be addressed at the product layer before the capability is useful.

We faced a real tradeoff: move fast on capability expansion, or hold until security controls built for agents existed. We chose to move, deliberately and on an opt-in basis, with three controls in place: controlled agentic session duration, explicit opt-in for higher-risk operations, and updated security guidelines written for agent interaction patterns rather than human ones.

At production scale, the “let the agent do everything” model breaks for a specific reason: agents don’t have the contextual judgment to know when a policy exception is warranted versus when it’s a hallucination driving an edge case. The governance layer has to compensate for what the agent can’t reason about. That’s the design constraint that shapes every product decision we’ve made in this space.
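As a rough illustration of the first two controls described above (a bounded session and explicit opt-in for higher-risk operations), here is a minimal Python sketch. It is not the Fireblocks implementation: the `AgentSession` class, the `authorize` function, and the operation names are all hypothetical, chosen only to show how such a gate could be shaped.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical operation names, for illustration only.
HIGH_RISK_OPERATIONS = {"create_transaction", "update_policy"}


@dataclass
class AgentSession:
    """A time-boxed agent session with explicit opt-ins (illustrative)."""
    started_at: datetime
    max_duration: timedelta
    opted_in: set[str] = field(default_factory=set)

    def is_active(self, now: datetime) -> bool:
        return now - self.started_at < self.max_duration


def authorize(session: AgentSession, operation: str, now: datetime) -> str:
    """Return "allow", "deny", or "escalate" for a requested operation."""
    if not session.is_active(now):
        return "deny"  # session expired: the agent must start a new one
    if operation in HIGH_RISK_OPERATIONS and operation not in session.opted_in:
        return "escalate"  # no opt-in on record: hand off to a human
    return "allow"


start = datetime(2026, 1, 1, tzinfo=timezone.utc)
session = AgentSession(started_at=start, max_duration=timedelta(minutes=15))
print(authorize(session, "create_transaction", start + timedelta(minutes=5)))
# prints "escalate"
```

The point of the sketch is the ordering: the session check runs before anything else, and a higher-risk operation without an opt-in is escalated rather than silently dropped.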

Why neither approach is sufficient

Running both paths in parallel for six months gave us something most teams don’t have: insights into user behaviour that articulated the difference in utility between native AI and external agent integration. They aren’t competing approaches. They solve different parts of the same problem.

The native experience gives institutions immediate operational value while Fireblocks retains full UX control. Teams that want AI-driven treasury management or policy investigation without building integrations get that out of the box. The tradeoff, though, is higher development and token costs for Fireblocks, and the ongoing overhead of maintaining and updating proprietary models as the underlying technology moves at machine speed.

The agentic infrastructure approach gives massive scalability with ecosystem reach. Teams that have already invested in their own AI stack, or that need agents to interact with Fireblocks as part of a larger workflow, can connect directly and bring their own intelligence. The tradeoff here is that there are more surface areas, which means more security and hallucination risks, less control over how Fireblocks shows up in the experience, and integration friction that has to be managed.

Neither approach handles everything a user requires, and we realised the true answer is a hybrid approach. We’re exposing Genie as both an API and an MCP tool to give agents the freedom to either:

- Access the platform via low-level API tools mapped to the platform building blocks with policy and security controls intact

- Leverage Genie’s AI-powered reasoning to orchestrate more complex queries and operations, with the reliability and guardrails embedded directly

The trust layer doesn’t change depending on which path the agent takes. That was the design constraint from the beginning.
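One way to picture a trust layer that is identical on both paths is a single policy check that every entry point is wrapped in. The Python sketch below assumes a toy amount limit and hypothetical names (`enforce_policy`, `guarded`, `low_level_tool`, `reasoning_layer`); it illustrates the pattern only and says nothing about how the platform is actually built.

```python
from typing import Callable

# Hypothetical limit; a real policy engine would be far richer.
MAX_TRANSFER_AMOUNT = 10_000


def enforce_policy(request: dict) -> None:
    """Single policy check shared by every entry point."""
    if request.get("amount", 0) > MAX_TRANSFER_AMOUNT:
        raise PermissionError("amount exceeds policy limit")


def guarded(handler: Callable[[dict], str]) -> Callable[[dict], str]:
    """Wrap an entry point so it cannot run without the policy check."""
    def wrapper(request: dict) -> str:
        enforce_policy(request)
        return handler(request)
    return wrapper


@guarded
def low_level_tool(request: dict) -> str:
    # Path 1: the agent calls a building-block tool directly.
    return f"tool executed transfer of {request['amount']}"


@guarded
def reasoning_layer(request: dict) -> str:
    # Path 2: a reasoning layer plans the operation, then executes it.
    return f"reasoning layer executed transfer of {request['amount']}"
```

Because both handlers pass through the same wrapper, a request that violates policy fails the same way regardless of which path the agent chose.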

Coding agents changed what AI infrastructure solutions need to deliver

There’s a parallel shift most infrastructure providers haven’t fully accounted for. Engineering teams aren’t just using AI to write code. They’re using coding agents to build automated production-ready workflows, custom integrations, and operational tooling that runs without a developer in the loop. Claude Code is an obvious example, but the category is broader than any single tool.

This changes what “developer-friendly” means for AI infrastructure solutions. It’s no longer sufficient to have a good SDK and clear documentation. If your developer docs aren’t structured for an agent to parse and act on, your platform is invisible to the fastest-growing class of builders in the market right now.

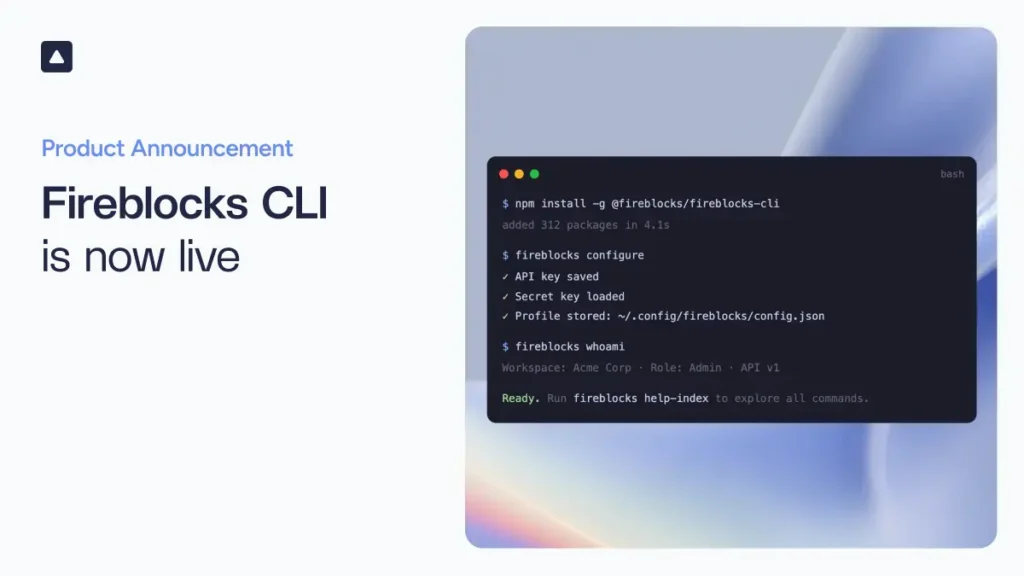

We responded to this by shipping the Fireblocks CLI and restructuring our developer documentation to be LLM-readable. The documentation work is honestly less glamorous than any product launch, but it matters more than it sounds. A coding agent that can’t navigate your API reference is an agent that will route around your platform rather than through it.

The vision: agents as a first-class user persona

The way most platforms are built, including how Fireblocks was built, assumes that a human is the operator. Agents are principal operators in their own right, with their own identity and ways of working. That means the jobs to be done for an agent need to be treated differently. Agents are their own user persona that platforms need to design for.

Treating agents as a user persona means three things in practice.

- The entire operational lifecycle has to work headless. We already run quality assurance with agentic workflows in scope. Policy configurations have to be interpretable by agents, not just enforceable against them. Agents need to be able to take more autonomy for routine AI-driven financial operations while keeping humans in the loop where the stakes are high. The governance layer has to interact with agents in a format they can reason with, i.e., a mix of predictive mechanisms and policy controls, not just a block on things agents don’t recognize.

- The trust network is extending to agents. The Fireblocks Network has connected institutions and counterparties since 2019. The next version of that network enables users and their agents to discover, connect with, and delegate work to other agentic services, with compliance and security enforced at the network layer. Agent-to-agent trust is a different problem than institution-to-institution trust, and most of the infrastructure for it doesn’t exist yet. While the pieces we shipped over the last year were foundational, there are core capabilities tied to agent identity and trust at the infrastructure level that we’ll be putting into production soon.

- Agentic commerce has to run on infrastructure that treats agent-to-agent payments as a native transaction type. Protocols for agents finding and paying for services are emerging fast (more on the x402 side of this to come). The settlement infrastructure underneath those protocols is what determines whether they work at institutional scale, meaning compliance, auditability, and policy enforcement need to be baked into every transaction.
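To make the first point above more concrete, a governance layer that agents can reason with might return a structured decision rather than a bare rejection. The Python sketch below is an assumption-laden illustration: `PolicyDecision`, `evaluate_transfer`, the field names, and the `transfer-limit` rule identifier are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class PolicyDecision:
    outcome: str           # "allow" or "needs_human_approval"
    rule_id: str           # which rule produced the outcome
    reason: str            # explanation the agent can read
    suggested_action: str  # what the agent can do next


def evaluate_transfer(amount: float, approval_threshold: float) -> PolicyDecision:
    """Decide whether an agent may send `amount` without human approval."""
    if amount <= approval_threshold:
        return PolicyDecision(
            outcome="allow",
            rule_id="transfer-limit",
            reason="amount is within the autonomous limit",
            suggested_action="proceed",
        )
    return PolicyDecision(
        outcome="needs_human_approval",
        rule_id="transfer-limit",
        reason=f"amount {amount} exceeds the autonomous limit of {approval_threshold}",
        suggested_action="request approval from a human operator",
    )


# Serialize the decision so it can be handed back to the agent as JSON.
decision = evaluate_transfer(500, approval_threshold=100)
print(json.dumps(asdict(decision), indent=2))
```

The design choice being illustrated is that the response names the rule, the reason, and a next step, so routine work stays autonomous while high-stakes work routes to a human.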

Check out the recent report from Dynamic, a Fireblocks company, on agentic finance for a deeper dive, or contact our team to learn more about Fireblocks agentic digital asset infrastructure.